Insights & Resources

Stay informed with the latest in AI infrastructure, GPU computing, and enterprise server technology.

AI Model Compression: Reducing Model Size to Maximize Inference ROI

Advanced compression techniques enable 80-95% size reductions while maintaining 95%+ accuracy. Fit massive workloads onto fewer GPUs and drastically reduce inference costs.

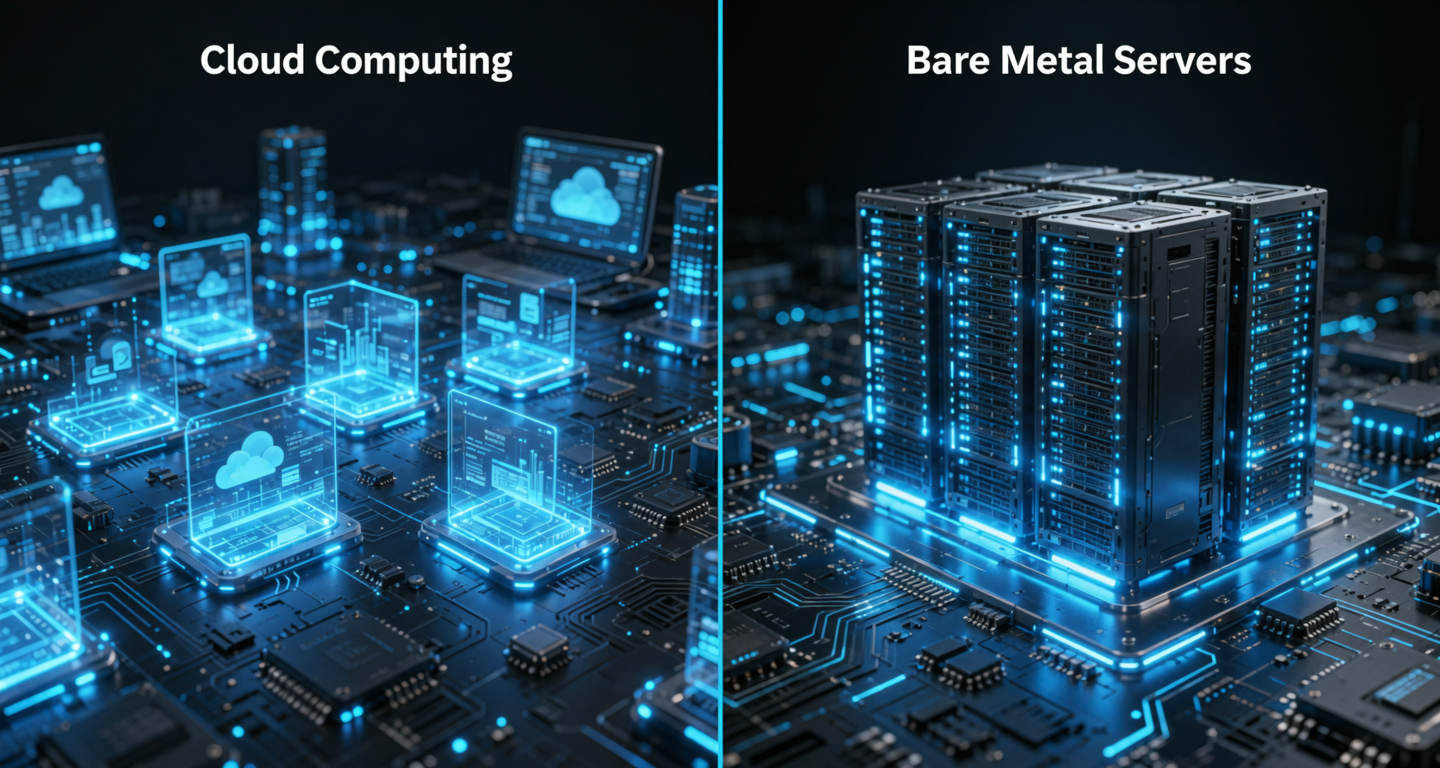

The Hidden Costs of Public Cloud AI: Why Bare-Metal Delivers 40% Higher ROI

Virtualization taxes, data egress fees, and noisy neighbors erode your margins. Discover why enterprises migrating to bare-metal observe up to 40% ROI improvement.

PCIe vs. NVLink: Understanding Multi-GPU Communication for Trillion-Parameter Models

When scaling to trillion-parameter models, the interconnect becomes the bottleneck. Learn why NVLink's 900 GB/s bandwidth crushes PCIe's 128 GB/s for distributed training.

Escaping Vendor Lock-in: Open Source LLMs on Private Infrastructure vs. Proprietary APIs

Proprietary APIs create financial unpredictability and data privacy risks at scale. Open-source models on private hardware let you own AI as a core corporate asset.

Computer Vision in Manufacturing: Scaling Defect Detection with Localized GPU Nodes

Centralized cloud fails on the factory floor. Localized GPU nodes deliver sub-50ms inference for real-time defect detection without bandwidth costs or latency risks.