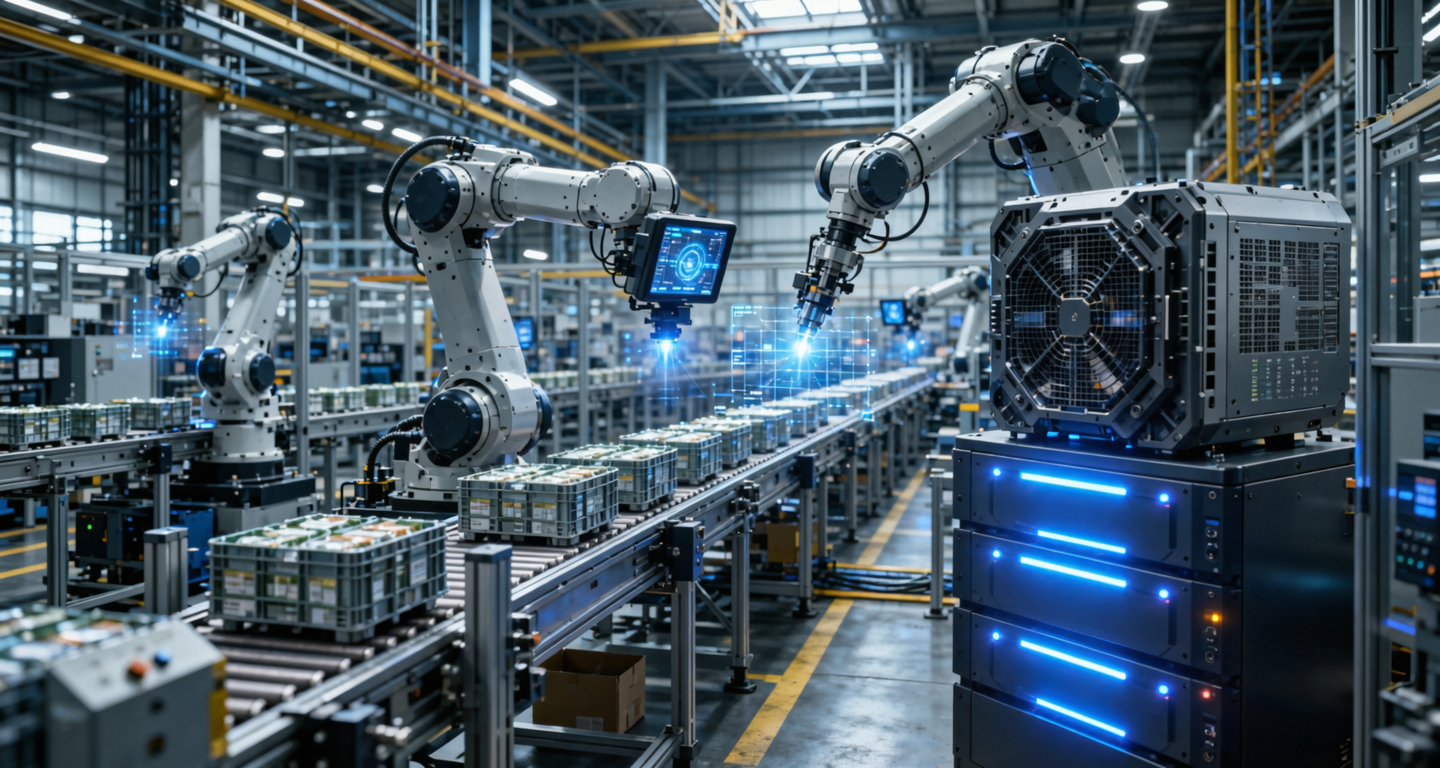

As global manufacturing transitions toward Industry 4.0, deep learning-based computer vision has become the gold standard for Automated Optical Inspection. Modern AI models can detect microscopic surface anomalies, misalignment, and structural defects with accuracy and consistency that exceed those of human operators. However, as Chief Information Officers attempt to scale these pilot programs across dozens of production lines, they encounter a severe architectural roadblock: the centralized public cloud is fundamentally incompatible with the physical realities of high-speed manufacturing.

To deploy real-time defect detection at an industrial scale, enterprises must pivot their infrastructure strategy from cloud-dependent processing to localized, high-performance GPU nodes.

The Physics of Latency in High-Speed Production

The most unforgiving metric on a factory floor is time. Modern assembly lines operate at extreme velocities, often requiring quality control decisions to be made in under 50 milliseconds. If the AI model's verdict arrives too late, the flawed component has already moved past the mechanical rejection actuator. The defect was detected but not rejected, so it escapes downstream and compromises batch integrity.

Relying on a centralized cloud architecture introduces unpredictable network round-trip times. Routing high-definition video feeds from a facility in Southeast Asia to a hyperscaler data center, processing the frame, and returning the actuation signal often takes 100 to 200 milliseconds or more, several times the 50-millisecond budget. Furthermore, public internet routing is susceptible to jitter, so even the average figure cannot be relied on frame after frame. Localized bare-metal GPU nodes placed directly on the factory floor, or in a nearby regional data center, remove or sharply shorten this transit phase. By keeping the compute physically adjacent to the data source, manufacturers achieve predictable, low latency that keeps AI inference in step with the mechanical speed of the conveyor belt.
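The latency argument above can be sketched as a simple per-frame budget. This is a minimal illustration, not a measurement: the belt speed, camera-to-actuator distance, and every millisecond figure below are hypothetical values chosen only to land on the 50-millisecond window and the 100 to 200 millisecond cloud round trip mentioned in the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LatencyBudget:
    """Per-frame latency components, all in milliseconds (illustrative values)."""
    capture_ms: float      # exposure plus frame transfer off the camera
    network_rtt_ms: float  # round trip between the camera and the inference host
    inference_ms: float    # model forward pass
    actuation_ms: float    # signalling the rejection actuator

    def total_ms(self) -> float:
        return (self.capture_ms + self.network_rtt_ms
                + self.inference_ms + self.actuation_ms)

    def meets(self, deadline_ms: float) -> bool:
        """True if the verdict arrives before the part reaches the actuator."""
        return self.total_ms() <= deadline_ms


def decision_window_ms(distance_m: float, belt_speed_m_per_s: float) -> float:
    """Time a part takes to travel from the camera to the rejection actuator."""
    return distance_m / belt_speed_m_per_s * 1000.0


# Hypothetical line: actuator 0.10 m downstream of the camera, belt at 2 m/s.
deadline = decision_window_ms(0.10, 2.0)

# Assumed component timings; only the network round trip differs.
cloud = LatencyBudget(capture_ms=5, network_rtt_ms=150, inference_ms=10, actuation_ms=5)
local = LatencyBudget(capture_ms=5, network_rtt_ms=1, inference_ms=10, actuation_ms=5)

for name, budget in (("cloud", cloud), ("local", local)):
    verdict = "in time" if budget.meets(deadline) else "too late"
    print(f"{name}: {budget.total_ms():.0f} ms of {deadline:.0f} ms -> {verdict}")
```

In practice the budget should be checked against worst-case (tail) latency rather than the average, since jitter is what causes individual parts to slip past the actuator.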

The Bandwidth Trap of Video Inference

Unlike text-based Large Language Model workloads, computer vision ingests an enormous volume of unstructured data. A single industrial 4K camera operating at 60 frames per second can generate gigabytes of data per minute even when compressed; uncompressed 8-bit RGB at that resolution and frame rate works out to roughly 1.5 GB per second. A standard factory floor may easily deploy 50 to 100 such cameras.
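The scale is easy to check with back-of-the-envelope arithmetic. The sketch below assumes uncompressed 8-bit RGB frames (3 bytes per pixel) at 3840x2160; that pixel format is an assumption, and many industrial cameras output monochrome or Bayer data at 1 byte per pixel, which would cut the figures to a third.

```python
def raw_rate_bytes_per_s(width: int, height: int, bytes_per_pixel: int, fps: int) -> int:
    """Uncompressed video data rate for one camera, in bytes per second."""
    return width * height * bytes_per_pixel * fps


def to_gbit_per_s(bytes_per_s: float) -> float:
    """Convert bytes per second to gigabits per second (decimal units)."""
    return bytes_per_s * 8 / 1e9


# Assumed format: 4K UHD, 8-bit RGB, 60 frames per second.
PER_CAMERA = raw_rate_bytes_per_s(3840, 2160, 3, 60)

print(f"one camera: {PER_CAMERA / 1e9:.2f} GB/s, "
      f"{PER_CAMERA * 60 / 1e9:.1f} GB/min")

for cameras in (50, 100):
    fleet = PER_CAMERA * cameras
    print(f"{cameras} cameras: {to_gbit_per_s(fleet):.0f} Gbit/s of raw video")
```

Under these assumptions a 50-camera floor produces raw video at several hundred gigabits per second, which is the volume the next section argues cannot realistically leave the building.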

Attempting to stream this continuous volume of uncompressed video out of the facility to a public cloud quickly saturates the corporate network uplink. Even if the bandwidth is available, the recurring cost of moving that much data (a dedicated uplink, plus whatever transfer, storage, and egress fees the cloud provider charges) can erase the project's Return on Investment. Localized GPU nodes restructure this data pipeline. The heavy lifting, running the TensorRT inference, happens on-site. The localized hardware discards the raw video immediately after processing and transmits only lightweight metadata (such as defect logs, timestamps, and isolated anomaly images) to the central corporate database.
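A minimal sketch of that metadata-only pipeline follows. The `DefectRecord` fields, the `process_frame` helper, and the `fake_detect` stub are all hypothetical names introduced for illustration; the stub stands in for the real on-site inference engine, whose API is not shown here, and cropping of anomaly images is omitted.

```python
import json
import time
from dataclasses import asdict, dataclass
from typing import Callable, Optional, Tuple

# A detector takes raw frame bytes and returns (label, score), or None if the part is good.
Detector = Callable[[bytes], Optional[Tuple[str, float]]]


@dataclass
class DefectRecord:
    """Lightweight metadata sent upstream in place of the raw frame."""
    camera_id: str
    timestamp: float
    label: str
    score: float


def process_frame(camera_id: str, frame: bytes, detect: Detector,
                  now: Callable[[], float] = time.time) -> Optional[str]:
    """Run inference locally and return a JSON record only when a defect is found.

    The raw frame is never stored or forwarded; it goes out of scope on return.
    """
    result = detect(frame)
    if result is None:
        return None
    label, score = result
    return json.dumps(asdict(DefectRecord(camera_id, now(), label, score)))


def fake_detect(frame: bytes) -> Optional[Tuple[str, float]]:
    """Placeholder detector (assumption): flags frames whose first byte is 0xFF."""
    return ("scratch", 0.97) if frame[:1] == b"\xff" else None


good_frame = bytes(1000)
bad_frame = b"\xff" + bytes(999)

print(process_frame("cam-01", good_frame, fake_detect))  # None: nothing leaves the site
payload = process_frame("cam-01", bad_frame, fake_detect)
print(len(bad_frame), "bytes of frame ->", len(payload), "bytes of metadata")
```

With real 4K frames of roughly 25 MB each, the ratio between what is processed and what is transmitted is several orders of magnitude larger than in this toy example.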

Operational Resilience and Data Sovereignty

A manufacturing plant cannot halt production simply because an Internet Service Provider (ISP) experiences a routing issue. Cloud-dependent CV systems introduce a single point of failure: if the Wide Area Network goes down, the factory goes blind. Localized GPU clusters provide critical operational resilience. Because the inference engine runs entirely on the local area network, the defect detection system remains fully operational during a WAN outage, and even in a deliberately air-gapped deployment.
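One practical consequence is that inspection continues during an outage while the upstream reporting has to wait. A common way to handle this is a store-and-forward buffer; the sketch below is one possible design, not something the article prescribes, and the `StoreAndForward` class and `send` callback are hypothetical names.

```python
from collections import deque
from typing import Callable, Deque, List


class StoreAndForward:
    """Buffers defect records locally and drains them in order when the WAN is back."""

    def __init__(self, send: Callable[[str], bool], max_pending: int = 10_000) -> None:
        self._send = send  # returns True once a record has been delivered upstream
        # Bounded buffer: when full, the oldest record is dropped to protect memory.
        self._pending: Deque[str] = deque(maxlen=max_pending)

    def publish(self, record: str) -> None:
        """Queue a record, then try to deliver everything that is waiting."""
        self._pending.append(record)
        self.flush()

    def flush(self) -> int:
        """Send queued records oldest-first; stop at the first failure."""
        sent = 0
        while self._pending and self._send(self._pending[0]):
            self._pending.popleft()
            sent += 1
        return sent

    @property
    def pending(self) -> int:
        return len(self._pending)


# Simulated uplink: a flag stands in for WAN availability.
link = {"up": False}
delivered: List[str] = []


def send(record: str) -> bool:
    if link["up"]:
        delivered.append(record)
    return link["up"]


queue = StoreAndForward(send)
queue.publish("defect-1")  # WAN down: inspection continues, record waits locally
queue.publish("defect-2")
print("pending during outage:", queue.pending)

link["up"] = True
queue.publish("defect-3")  # WAN restored: backlog drains in order
print("delivered:", delivered, "pending:", queue.pending)
```

A production version would likely persist the buffer to disk so that records survive a node restart; the in-memory deque keeps the sketch short.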

Additionally, production lines capture highly sensitive intellectual property. Continuous video feeds of unreleased product designs, proprietary assembly techniques, and exact yield rates are prime targets for industrial espionage. Sending this data to a multi-tenant cloud widens the attack surface and places it outside the company's direct control. Localized computing ensures that the raw data never crosses the corporate firewall, which makes it far easier to satisfy strict data sovereignty and compliance mandates.

The Strategic Imperative

Scaling AI in manufacturing is not a software challenge; it is an infrastructure challenge. Treating a factory floor like a standard web application leads to paralyzing latency, exploding bandwidth bills, and fragile operations. By investing in dedicated, localized GPU compute nodes, industrial enterprises transform computer vision from an expensive cloud experiment into a robust, high-throughput component of their core manufacturing process.

Bring AI to the Factory Floor

BRIGHTCHIP provides localized, high-performance GPU nodes engineered for real-time computer vision workloads. Eliminate cloud latency and bandwidth costs—deploy deterministic AI inference where it matters most.